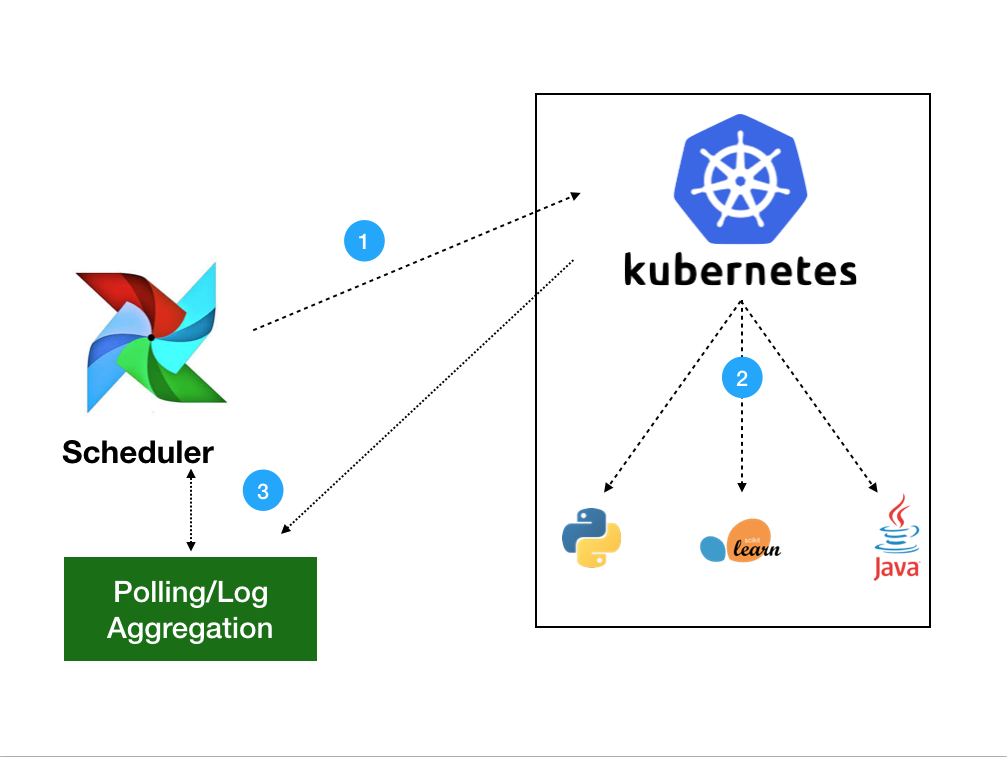

A Role for the spark-on-k8s-operator allows it to create resources on the cluster. With that in place, you can submit Spark jobs to a Kubernetes cluster using the SparkKubernetesOperator from an Airflow DAG.

Airflow has a concept of operators, which represent Airflow tasks. In your example a PythonOperator is used, which simply executes Python code and is most probably not the one you are interested in, unless you submit the Spark job from within Python code. The KubernetesPodOperator allows you to create Pods on Kubernetes (see "Airflow on Kubernetes (Part 1): A Different Kind of Operator"). This process is faster to execute and easier to modify.

You can have the worker image be the same image you run your scheduler with. In this case, the default Docker image is the same between the workers (which run tasks), the scheduler, and the webserver. However, you're not forced to use the same image as your scheduler and webserver: you can inject whatever image you want, as long as Airflow is installed on the image. For instance, an Operator that uses the put_your_image_here image as the base is entirely valid.

A DAG that uses the operator typically imports `datetime`, `models` from `airflow`, and the `secret` and `kubernetes_pod_operator` modules. A Secret is an object that contains a small amount of sensitive data such as a password, a token, or a key. Such information might otherwise be put in a Pod specification or in an image.

When the worker pod runs, a new Airflow instance is instantiated and the code that is being asked to run (whether shell, Python, SQL, etc.) is run on the worker's Airflow instance as a LocalExecutor. Task state is also reconciled against the cluster: if a task is listed as running but no Kubernetes pod is running, the task is killed and rerun in a new Kubernetes pod.

Pod Mutation Hook: the Airflow local settings file (`airflow_local_settings.py`) can define a pod mutation hook, a function that can alter pod objects before they are sent to Kubernetes for scheduling. As of Airflow 1.10.12, you can also use the `pod_template_file` option in the `kubernetes` section of the `airflow.cfg` file to form the basis of your KubernetesExecutor pods.
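The pod mutation hook mentioned above can be sketched roughly as follows. This is a minimal sketch, assuming an Airflow release in which the hook receives an object laid out like the Kubernetes client's `V1Pod` (with `metadata.labels` and `spec.node_selector`); older releases passed a different pod class. The label and node pool values are hypothetical, and the `SimpleNamespace` stand-in exists only so the function can be tried without a cluster.

```python
from types import SimpleNamespace


def pod_mutation_hook(pod):
    """Would live in airflow_local_settings.py; adjusts each pod before launch."""
    # Labels may be unset on a freshly built pod, so fall back to an empty dict.
    labels = dict(pod.metadata.labels or {})
    labels["team"] = "data-eng"  # hypothetical label
    pod.metadata.labels = labels

    # Pin worker pods to a dedicated node pool (hypothetical pool name).
    selector = dict(pod.spec.node_selector or {})
    selector["pool"] = "airflow-workers"
    pod.spec.node_selector = selector


# Stand-in with the same attribute layout as a V1Pod, for a local dry run only.
demo_pod = SimpleNamespace(
    metadata=SimpleNamespace(labels=None),
    spec=SimpleNamespace(node_selector=None),
)
pod_mutation_hook(demo_pod)
print(demo_pod.metadata.labels, demo_pod.spec.node_selector)
```

The hook mutates the pod in place rather than returning a new one, which is why the stand-in can be inspected after the call.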

This alleviates the pressure on the main scheduler / webserver instance, since the main scheduler is only required to check that a pod was created and to run sanity checks. The task pod communicates directly with the SQL backend of your Airflow instance and takes care of updating the task's status there. With this, each and every task that the Executor generates creates a new Kubernetes pod from the image you specify, running a separate Airflow instance with the same SQL backend for results. The task is then run inside the spawned pod. The worker image is set in the configuration as `worker_container_repository = /your_image`. Tasks can scale using the Spark master support made available in Spark 2. One of the available examples is 3-kubernetes-pod-operator-spark: execute Spark tasks against a Kubernetes cluster using KubernetesPodOperator.

When you write your own operator, `execute(context)` is the main method to derive. Context is the same dictionary used when rendering Jinja templates. Refer to `get_template_context` for more context. Override `on_kill` to clean up subprocesses when a task instance gets killed. Any use of the threading, subprocess or multiprocessing module within an operator needs to be cleaned up, or it will leave ghost processes behind. All classes for this provider package are in a single Python package.
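The cleanup rule above (anything started through `subprocess` has to be stopped when the task is killed) can be illustrated with a small stand-in class. This is only a sketch: `SleepTask` is a hypothetical name and deliberately does not import Airflow. In a real operator the same `execute(context)` and `on_kill()` methods would sit on a subclass of Airflow's `BaseOperator`.

```python
import subprocess
import sys


class SleepTask:
    """Stand-in for an operator that runs its work in a child process."""

    def __init__(self, seconds):
        self.seconds = seconds
        self._proc = None

    def start(self):
        # Launch the child and remember the handle so on_kill can reach it.
        code = f"import time; time.sleep({self.seconds})"
        self._proc = subprocess.Popen([sys.executable, "-c", code])
        return self._proc

    def execute(self, context):
        # Main entry point: start the child and block until it exits.
        self.start()
        return self._proc.wait()

    def on_kill(self):
        # Called when the task instance is killed; stop the child process.
        proc = self._proc
        if proc is None or proc.poll() is not None:
            return  # nothing running, nothing to clean up
        proc.terminate()
        try:
            proc.wait(timeout=5)
        except subprocess.TimeoutExpired:
            proc.kill()  # escalate if the child ignores the terminate request
            proc.wait()


task = SleepTask(60)
child = task.start()
task.on_kill()
print("child exited:", child.poll() is not None)
```

Keeping the process handle on the instance is the design point: without it, `on_kill` has no way to find the child, and the child outlives the task.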
Airflow is a platform for developing, scheduling, and monitoring workflows programmatically. With Airflow you can develop a workflow as a DAG (Directed Acyclic Graph), and you can build an expandable pipeline by connecting DAGs.

As of today, there is an ongoing issue with Airflow 1.10.2. The reported description reads: "Related to this, when we manually fail a task, the DAG task stops running, but the Pod in the DAG does not get killed and ...". In addition, the timeout is only enforced for scheduled DagRuns, and only once the number of active DagRuns equals `max_active_runs`.

The SparkKubernetesOperator takes the following parameters. For more detail about the SparkApplication object, have a look at its reference.

- `application_file` (str): defines the Kubernetes `custom_resource_definition` of `sparkApplication` as either a path to a `.yaml` file, a `.json` file, a YAML string, or a JSON string.
- `namespace` (str | None): Kubernetes namespace to put the sparkApplication in.
- `kubernetes_conn_id` (str): the Kubernetes connection id.
- `api_group` (str): Kubernetes API group of sparkApplication.
- `api_version` (str): Kubernetes API version of sparkApplication.
- `watch` (bool): whether to watch the job status and logs or not.

The class also declares `template_fields: Sequence = ('application_file', 'namespace')`, `template_ext: Sequence = ('.yaml', '.yml', '.json')`, and `ui_color = '#f4a460'`, and it implements `execute(context)`.
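Since `application_file` accepts a JSON string, one way to produce it is to build the SparkApplication manifest as a Python dict and serialize it. The sketch below is an assumption-laden illustration rather than the operator's own code: `build_spark_application` is a hypothetical helper, the `sparkoperator.k8s.io/v1beta2` API version, the Spark version, the resource sizes, the `spark` service account, and the application path are all placeholder values, and the image name reuses the put_your_image_here placeholder.

```python
import json


def build_spark_application(name, namespace, image, main_file, executors=2):
    """Return a SparkApplication manifest as a plain dict (hypothetical helper)."""
    return {
        "apiVersion": "sparkoperator.k8s.io/v1beta2",  # assumed group/version
        "kind": "SparkApplication",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "type": "Python",
            "mode": "cluster",
            "image": image,
            "mainApplicationFile": main_file,
            "sparkVersion": "3.1.1",  # placeholder version
            "restartPolicy": {"type": "Never"},
            "driver": {"cores": 1, "memory": "512m", "serviceAccount": "spark"},
            "executor": {"cores": 1, "instances": executors, "memory": "512m"},
        },
    }


manifest = build_spark_application(
    name="example-job",
    namespace="default",
    image="put_your_image_here",
    main_file="local:///opt/app/job.py",  # placeholder path inside the image
    executors=3,
)

# JSON string form, one of the formats application_file is documented to accept.
application_json = json.dumps(manifest, indent=2)
print(application_json)
```

The resulting string would then be handed to the operator as `application_file`, alongside `namespace` and `kubernetes_conn_id`. Whether the cluster accepts it depends on the installed spark-on-k8s-operator version and the Role it has been granted.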
